Code Judges

Score your agent behavior using code and LLMs.

judgeval provides abstractions to implement judges arbitrarily in code, enabling full flexibility in your scoring logic and use cases.

You can use any combination of code, custom LLMs as a judge, or library dependencies.
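As a rough illustration of combining plain code with an LLM, the sketch below runs a cheap code check first and only then asks a model for a verdict. It is not part of judgeval: `llm_verdict`, `ask_llm`, `fake_llm`, and `PROMPT_TEMPLATE` are hypothetical names, and the model call is injected as a function so the example runs offline with a stand-in.

```python
import asyncio
from typing import Awaitable, Callable, Tuple

# Hypothetical type: any async function that sends a prompt to a model
# and returns its raw text reply. Swap in your own client call.
AskLLM = Callable[[str], Awaitable[str]]

PROMPT_TEMPLATE = (
    "Did the assistant resolve the customer's issue? "
    "Answer YES or NO on the first line, then give a one-sentence reason.\n\n"
    "Assistant response:\n{response}"
)


async def llm_verdict(response: str, ask_llm: AskLLM) -> Tuple[bool, str]:
    """Combine a cheap code check with an LLM call; return (passed, reason)."""
    # Code check first: skip the model call entirely for empty output.
    if not response.strip():
        return False, "The response is empty."

    reply = await ask_llm(PROMPT_TEMPLATE.format(response=response))
    # The first line carries the verdict, the remainder carries the reason.
    first_line, _, rest = reply.strip().partition("\n")
    passed = first_line.strip().upper().startswith("YES")
    reason = rest.strip() or first_line.strip()
    return passed, reason


async def fake_llm(prompt: str) -> str:
    # Stand-in for a real model so the sketch runs offline.
    return "YES\nThe reply gives a delivery time."


result = asyncio.run(llm_verdict("Your package arrives tomorrow.", fake_llm))
```

In a real judge, the returned pair would be used to populate the `value` and `reason` fields of the response type the judge declares.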

Implement a Code Judge

Create a Code Judge by inheriting from Judge and implementing the score method. You must specify a response type as a generic parameter. For binary and numeric results use BinaryResponse or NumericResponse directly. For categorical results, define a subclass of CategoricalResponse that declares the valid categories, then use that subclass as the generic parameter.

The score method receives an Example object, which can optionally carry a .trace for accessing trace data.
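Because the trace is optional, scoring logic that reads spans usually needs to handle its absence. The helper below is a minimal sketch of that, assuming spans are dict-like and carry the `span_kind` key used in this guide's trace example; `count_span_kinds` and the sample span names are hypothetical.

```python
from collections import Counter
from typing import Any, Iterable, Mapping, Optional


def count_span_kinds(spans: Optional[Iterable[Mapping[str, Any]]]) -> Counter:
    """Count spans per span_kind; a missing or empty trace yields an empty Counter."""
    if not spans:
        return Counter()
    # Spans without a span_kind are grouped under "unknown" (an assumption).
    return Counter(span.get("span_kind", "unknown") for span in spans)


# Made-up spans for illustration only.
sample_spans = [
    {"span_kind": "tool", "name": "lookup_order"},
    {"span_kind": "llm", "name": "draft_reply"},
    {"span_kind": "tool", "name": "track_package"},
]
counts = count_span_kinds(sample_spans)
print(counts["tool"])  # prints 2
```

Inside `score`, a helper like this could be called with `data.trace.spans` when a trace is present and with `None` otherwise.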

Additionally, judgeval allows you to upload scorers consisting of multiple files. Ensure that your entrypoint file contains the scorer class definition.

Create your judge file

You can use the CLI to generate skeleton code for your judge:

    judgeval scorer init --response-type binary --name ResolutionScorer

This creates a resolution_scorer.py file with the appropriate boilerplate. Use --response-type to select binary, categorical, or numeric.
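The relationship between the class name and the generated file name can be pictured with the sketch below. This is an assumption about the naming convention inferred from the ResolutionScorer example, not the CLI's actual implementation; `module_filename` is a hypothetical helper. It also applies the rule that the name must be a valid Python identifier.

```python
import re


def module_filename(class_name: str) -> str:
    """Convert a CamelCase class name to a snake_case .py file name."""
    if not class_name.isidentifier():
        raise ValueError(f"{class_name!r} is not a valid Python identifier")
    # Insert an underscore at each boundary between a lowercase letter or
    # digit and an uppercase letter, then lowercase the whole string.
    snake = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", class_name).lower()
    return f"{snake}.py"


print(module_filename("ResolutionScorer"))  # prints resolution_scorer.py
```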

CLI Options

--response-type, -t (str, required)
    Response type for the judge: binary, categorical, or numeric.

--name, -n (str, required)
    Class name for the generated judge (must be a valid Python identifier).

--init-path, -p (str)
    Directory to write the generated file to. Defaults to the current directory.

--include-requirements, -r (flag)
    Also create an empty requirements.txt file in the same directory.

--yes, -y (flag)

If you prefer to write it manually, here's an example:

    from judgeval.judges import Judge, BinaryResponse
    from judgeval.data import Example

    class ResolutionScorer(Judge[BinaryResponse]):
        async def score(self, data: Example) -> BinaryResponse:
            actual_output = data.get_property("actual_output")
            if "package" in actual_output:
                return BinaryResponse(
                    value=True,
                    reason="The response contains package information."
                )
            return BinaryResponse(
                value=False,
                reason="The response does not contain package information."
            )

The score method:

- Takes an Example as input
- Can access trace data via data.trace and data.trace.spans when available
- Returns one of three response types:
  - BinaryResponse: for true/false evaluations with a value (bool) and reason (string)
  - CategoricalResponse: for categorical evaluations; must be subclassed with a categories class variable (see below)
  - NumericResponse: for numeric evaluations with a value (float) and reason (string)
- All response types support optional citations to reference specific spans in your trace
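One way to keep a judge easy to test is to put the decision logic in a plain function that returns the value and reason, and have `score` wrap the result in the response object. The sketch below assumes that split; `package_verdict` is a hypothetical helper, and unlike the example above it matches case-insensitively and tolerates a missing output.

```python
from typing import Any, Tuple


def package_verdict(actual_output: Any) -> Tuple[bool, str]:
    """Return (value, reason) for the package check, tolerating bad input."""
    if not isinstance(actual_output, str):
        return False, "actual_output is missing or not a string."
    if "package" in actual_output.lower():
        return True, "The response contains package information."
    return False, "The response does not contain package information."


# Inside score(), the tuple would be unpacked into the response object, e.g.
#   value, reason = package_verdict(data.get_property("actual_output"))
#   return BinaryResponse(value=value, reason=reason)
value, reason = package_verdict("Your package will arrive tomorrow.")
```

The function has no judgeval dependency, so it can be covered by ordinary unit tests before the judge is run or uploaded.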

Here's an example that uses trace data to score based on span information:

    from judgeval.judges import Judge, NumericResponse
    from judgeval.data import Example

    class ToolCallScorer(Judge[NumericResponse]):
        async def score(self, data: Example) -> NumericResponse:
            if not data.trace or not data.trace.spans:
                return NumericResponse(
                    value=0.0,
                    reason="No trace data available."
                )
            tool_calls = [span for span in data.trace.spans if span.get("span_kind") == "tool"]
            return NumericResponse(
                value=float(len(tool_calls)),
                reason=f"Agent made {len(tool_calls)} tool call(s)."
            )

For categorical scoring, first define a CategoricalResponse subclass that lists the valid categories using Category, then use it as the generic parameter:

    from judgeval.judges import Judge, CategoricalResponse
    from judgeval.hosted.responses import Category
    from judgeval.data import Example

    class ResolutionResponse(CategoricalResponse):
        categories = [
            Category(value="Resolved", description="The agent fully resolved the customer's issue"),
            Category(value="Partially Resolved", description="The agent addressed part of the issue"),
            Category(value="Unresolved", description="The agent did not resolve the issue"),
        ]

    class ResolutionScorer(Judge[ResolutionResponse]):
        async def score(self, data: Example) -> ResolutionResponse:
            actual_output = data.get_property("actual_output")
            if "resolved" in actual_output.lower():
                return ResolutionResponse(
                    value="Resolved",
                    reason="The response indicates the issue was resolved."
                )
            return ResolutionResponse(
                value="Unresolved",
                reason="The response does not indicate resolution."
            )

Create a requirements file

Create a requirements.txt file with any dependencies your scorer needs. If you used judgeval scorer init with the --include-requirements flag to generate your judge, this file will already be created for you.

    # Add any dependencies your scorer needs
    # openai>=1.0.0
    # numpy>=1.24.0

Run Locally

Judge instances can be passed directly to evaluation.create().run() without uploading to the platform. Judges execute locally on your machine in parallel, and results are saved to your project on the Judgment platform.

    from judgeval import Judgeval
    from judgeval.data import Example
    from judgeval.judges import Judge, BinaryResponse

    class ResolutionScorer(Judge[BinaryResponse]):
        async def score(self, data: Example) -> BinaryResponse:
            actual_output = data["actual_output"]
            if "package" in actual_output:
                return BinaryResponse(
                    value=True,
                    reason="The response contains package information."
                )
            return BinaryResponse(
                value=False,
                reason="The response does not contain package information."
            )

    client = Judgeval(project_name="default_project")

    examples = [
        Example.create(
            input="Where is my package?",
            actual_output="Your package will arrive tomorrow at 10:00 AM."
        ),
        Example.create(
            input="Where is my package?",
            actual_output="I don't know."
        )
    ]

    results = client.evaluation.create().run(
        examples=examples,
        scorers=[ResolutionScorer()],
        eval_run_name="local_scoring_run"
    )

Upload Your Judge

Once you've implemented your Code Judge, upload it to Judgment using the CLI.

Set your credentials

Set your Judgment API key and organization ID as environment variables:

    export JUDGMENT_API_KEY="your-api-key"
    export JUDGMENT_ORG_ID="your-org-id"

Upload the judge

The entrypoint file is the file that contains the scorer class definition. It should be passed as an argument to the judgeval scorer upload command:

    judgeval scorer upload my_scorer.py -p my_project

You can also include additional files or directories needed by your judge:

    judgeval scorer upload my_scorer.py -i utils/ -i shared_helpers.py -p my_project

To provide a requirements file and a custom name:

    judgeval scorer upload my_scorer.py -r requirements.txt -n "Resolution Scorer" -p my_project

To bump the major version of an existing judge:

    judgeval scorer upload my_scorer.py -m -p my_project

CLI Options

entrypoint_path (str, required)
    Path to the entrypoint Python file containing your judge class.

--requirements, -r (str)
    Path to the requirements.txt file with dependencies.

--included-files, -i (str, repeatable)
    Path to additional files or directories to bundle with your judge. If a directory is provided, all non-ignored files in that directory are included. Can be specified multiple times.

--name, -n (str)
    Custom name for the judge. Auto-detected from the class name if not provided.

--project, -p (str)

--bump-major, -m (flag)
    Bump the major version of the judge. If unspecified, the minor version will be bumped.

--yes, -y (flag)

Set Environment Variables

You can also set environmental variables for your Code Judge on the Judgment platform.

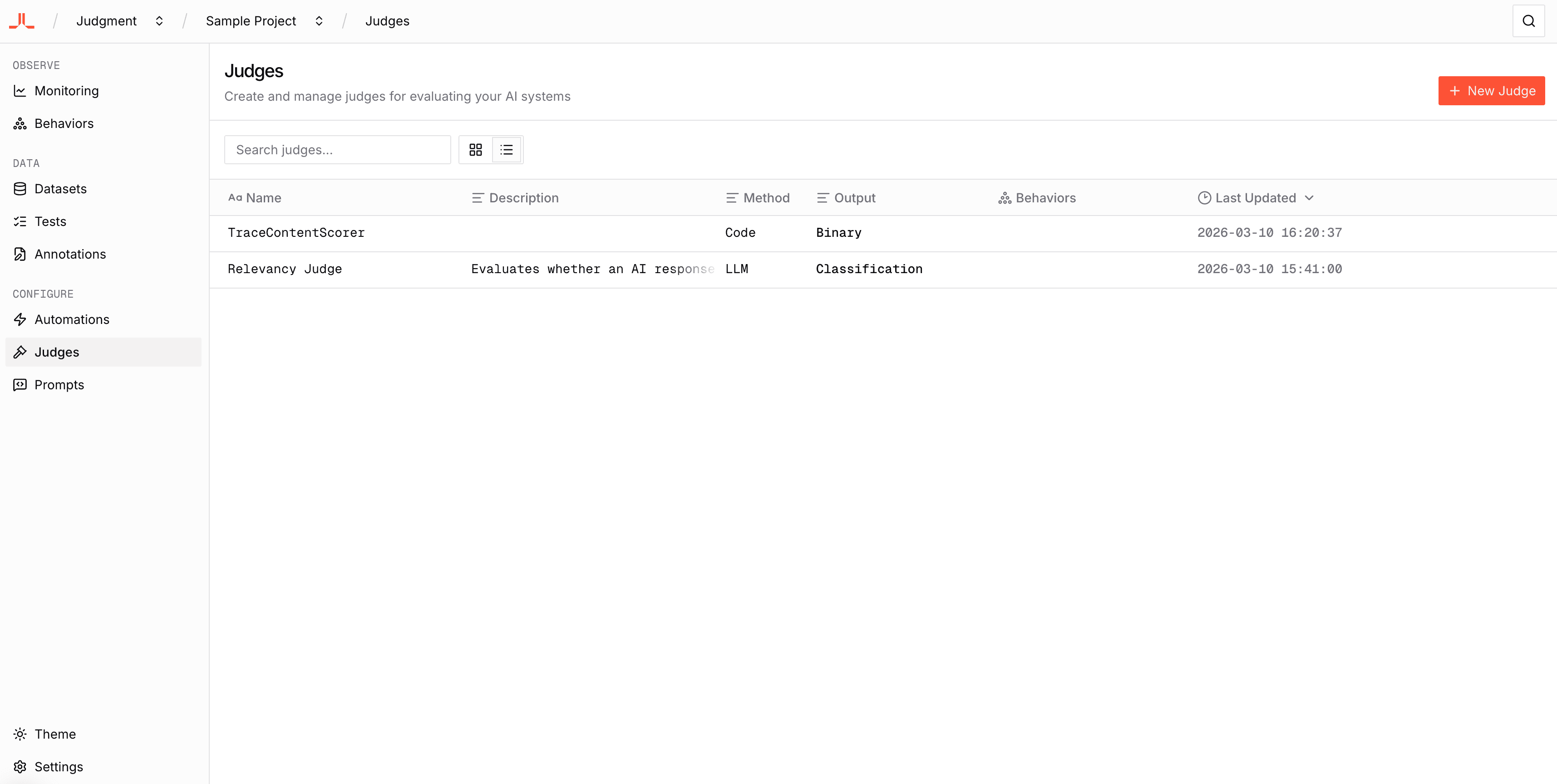

Navigate to the Judges page within your project.

Click on the Code Judge you would like to add the environmental variables for, then open the Environment Variables tab in the right-side panel of the judge detail page.

Enter in the environmental variable and click the "Add" button. Do this for all environmental variables needed for the Code Judge.
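Within the judge's code, such variables would typically be read through `os.environ`. The sketch below assumes the platform exposes them as ordinary process environment variables, which this guide does not confirm; `require_env` and the variable name `MY_LLM_API_KEY` are hypothetical.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable or fail with a clear error."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value


# For local runs only: provide a dummy value if none is set.
# MY_LLM_API_KEY is a hypothetical name used for illustration.
os.environ.setdefault("MY_LLM_API_KEY", "dummy-key-for-local-testing")
api_key = require_env("MY_LLM_API_KEY")
```

Failing early with the variable's name in the message makes a missing setting easier to spot than an authentication error raised later by a downstream client.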

Use Your Uploaded Judge

After uploading, you can use your Code Judge in online evaluations by calling Tracer.async_evaluate() within a traced function. Pass the judge name as a string.

    from judgeval import Tracer

    Tracer.init(project_name="my_project")

    @Tracer.observe(span_type="function")
    def my_agent(question: str) -> str:
        # Placeholder response; a real agent would generate this.
        response = "Your package will arrive tomorrow at 10:00 AM."
        # Score this input/output pair with the uploaded judge, referenced by name.
        Tracer.async_evaluate(
            judge="Resolution Scorer",
            example={
                "input": question,
                "actual_output": response,
            },
        )
        return response

    result = my_agent("Where is my package?")